what to do on a multiple choice test

Writing Good Multiple Choice Test Questions

Cite this guide: Brame, C. (2013) Writing good multiple choice test questions. Retrieved [todaysdate] from https://cft.vanderbilt.edu/guides-sub-pages/writing-good-multiple-choice-test-questions/.

- Constructing an Effective Stem

- Constructing Effective Alternatives

- Additional Guidelines for Multiple Choice Questions

- Considerations for Writing Multiple Choice Items that Test Higher-order Thinking

- Additional Resources

Multiple choice test questions, also known as items, can be an effective and efficient way to assess learning outcomes. Multiple choice test items have several potential advantages:

Versatility: Multiple choice test items can be written to assess various levels of learning outcomes, from basic recall to application, analysis, and evaluation. Because students are choosing from a set of potential answers, however, there are obvious limits on what can be tested with multiple choice items. For example, they are not an effective way to test students' ability to organize thoughts or articulate explanations or creative ideas.

Reliability: Reliability is defined as the degree to which a test consistently measures a learning outcome. Multiple choice test items are less susceptible to guessing than true/false questions, making them a more reliable means of assessment. The reliability is enhanced when the number of MC items focused on a single learning objective is increased. In addition, the objective scoring associated with multiple choice test items frees them from problems with scorer inconsistency that can plague scoring of essay questions.

Validity: Validity is the degree to which a test measures the learning outcomes it purports to measure. Because students can typically answer a multiple choice item much more quickly than an essay question, tests based on multiple choice items can typically focus on a relatively broad representation of course material, thus increasing the validity of the assessment.

The key to taking advantage of these strengths, however, is construction of good multiple choice items.
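The point under Reliability about guessing can be made concrete with a little arithmetic. The following is a minimal Python sketch, not part of the original guide. It assumes a test-taker who guesses blindly and picks every alternative with equal probability; the function names and the 20-item test length are hypothetical and chosen only for illustration.

```python
from fractions import Fraction


def chance_of_guessing(num_alternatives: int) -> Fraction:
    """Probability of hitting the answer by blind guessing.

    Assumption: every alternative is equally likely to be picked.
    """
    if num_alternatives < 2:
        raise ValueError("an item needs at least two alternatives")
    return Fraction(1, num_alternatives)


def expected_guessing_score(num_items: int, num_alternatives: int) -> Fraction:
    """Expected number of items answered correctly by guessing alone."""
    return num_items * chance_of_guessing(num_alternatives)


# Hypothetical 20-item test: true/false versus four-alternative items.
true_false = expected_guessing_score(20, 2)
four_alternatives = expected_guessing_score(20, 4)
print(true_false, four_alternatives)
```

Under these assumptions, a blind guesser is expected to get half of the true/false items right but only a quarter of the four-alternative items. Real test-takers rarely guess uniformly, so this is an idealized bound rather than a prediction.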

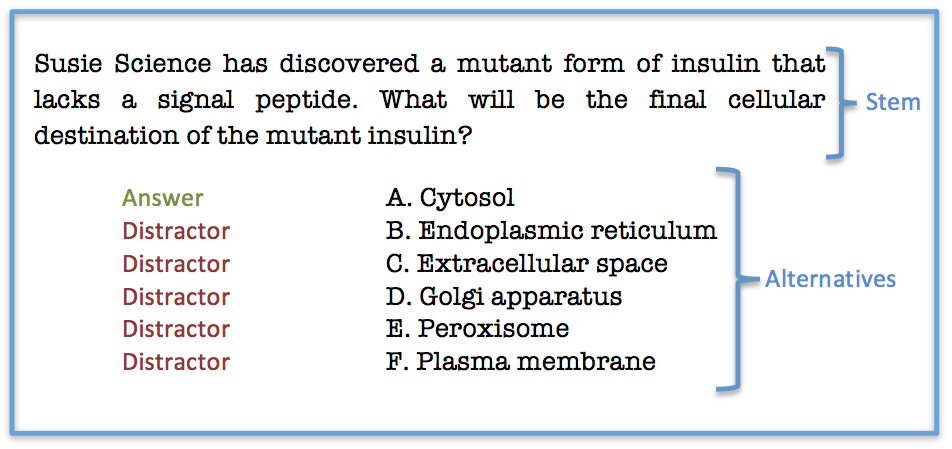

A multiple choice item consists of a problem, known as the stem, and a list of suggested solutions, known as alternatives. The alternatives consist of one correct or best alternative, which is the answer, and incorrect or inferior alternatives, known as distractors.

Constructing an Effective Stem

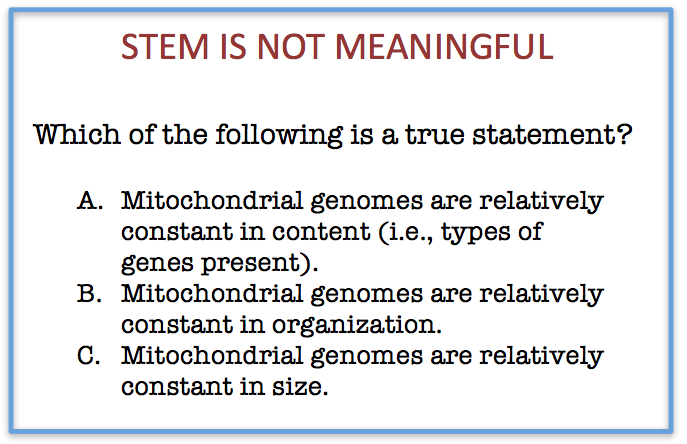

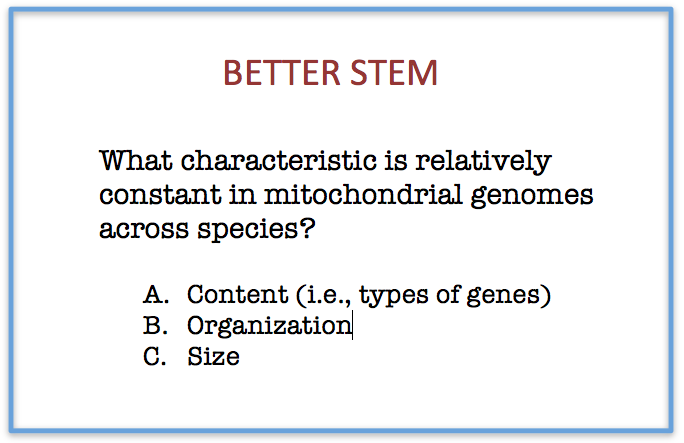

1. The stem should be meaningful by itself and should present a definite problem. A stem that presents a definite problem allows a focus on the learning outcome. A stem that does not present a clear problem, however, may test students' ability to draw inferences from vague descriptions rather than serving as a more direct test of students' achievement of the learning outcome.

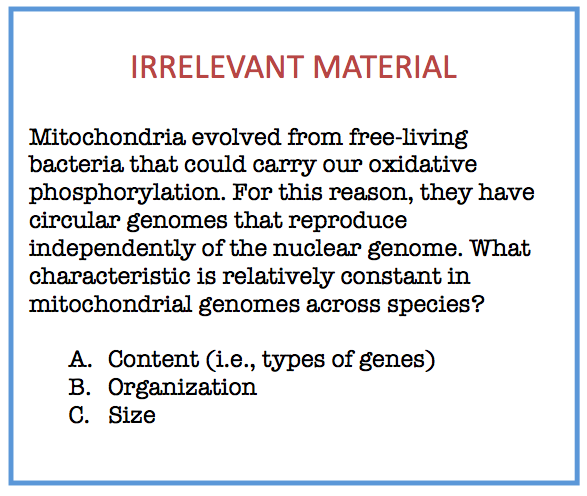

2. The stem should not contain irrelevant material, which can decrease the reliability and the validity of the test scores (Haladyna and Downing 1989).

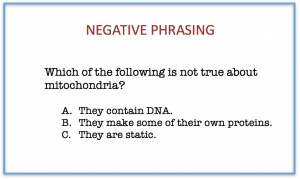

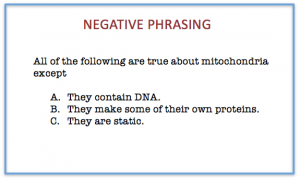

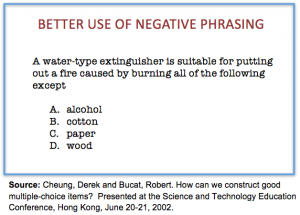

3. The stem should be negatively stated only when significant learning outcomes require it. Students often have difficulty understanding items with negative phrasing (Rodriguez 1997). If a significant learning outcome requires negative phrasing, such as identification of dangerous laboratory or clinical practices, the negative element should be emphasized with italics or capitalization.

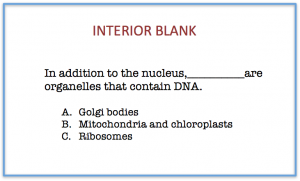

4. The stem should be a question or a partial sentence. A question stem is preferable because it allows the student to focus on answering the question rather than holding the partial sentence in working memory and sequentially completing it with each alternative (Statman 1988). The cognitive load is increased when the stem is constructed with an initial or interior blank, so this construction should be avoided.

Constructing Effective Alternatives

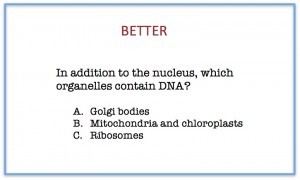

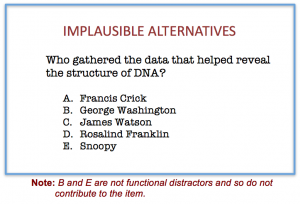

1. All alternatives should be plausible. The function of the incorrect alternatives is to serve as distractors, which should be selected by students who did not achieve the learning outcome but ignored by students who did achieve the learning outcome. Alternatives that are implausible don't serve as functional distractors and thus should not be used. Common student errors provide the best source of distractors.

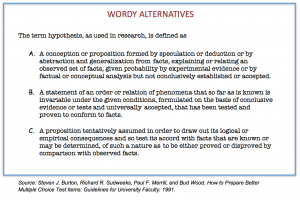

2. Alternatives should be stated clearly and concisely. Items that are excessively wordy assess students' reading ability rather than their attainment of the learning objective.

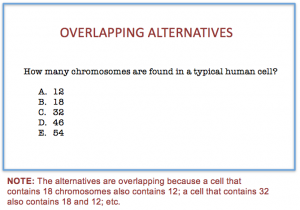

3. Alternatives should be mutually exclusive. Alternatives with overlapping content may be considered "trick" items by test-takers, excessive use of which can erode trust and respect for the testing process.

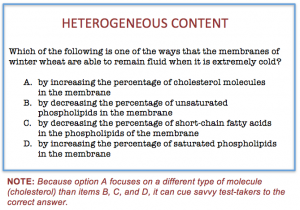

4. Alternatives should be homogeneous in content. Alternatives that are heterogeneous in content can provide cues to students about the correct answer.

5. Alternatives should be free from clues about which response is correct. Sophisticated test-takers are alert to inadvertent clues to the correct answer, such as differences in grammar, length, formatting, and language choice in the alternatives. It's therefore important that alternatives

- have grammar consistent with the stem.

- are parallel in form.

- are similar in length.

- use similar language (e.g., all unlike textbook language or all like textbook language).

6. The alternatives "all of the above" and "none of the above" should not be used. When "all of the above" is used as an answer, test-takers who can identify more than one alternative as correct can select the correct answer even if unsure about other alternative(s). When "none of the above" is used as an alternative, test-takers who can eliminate a single option can thereby eliminate a second option. In either case, students can use partial knowledge to arrive at a correct answer.

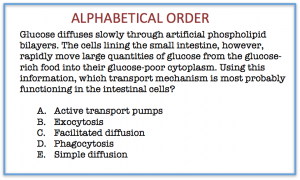

7. The alternatives should be presented in a logical order (e.g., alphabetical or numerical) to avoid a bias toward certain positions.

8. The number of alternatives can vary among items as long as all alternatives are plausible. Plausible alternatives serve as functional distractors, which are those chosen by students who have not achieved the objective but ignored by students who have achieved the objective. There is little difference in difficulty, discrimination, and test score reliability among items containing two, three, and four distractors.
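Two of the guidelines above are mechanical enough to check automatically: logical ordering (guideline 7) and similar length (part of guideline 5). The following is a minimal Python sketch, not a tool described in the original guide. The function names, the choice to measure length in words, and the 0.5 tolerance are all assumptions made for illustration, and the sample alternatives are hypothetical.

```python
from statistics import median


def order_alternatives(alternatives):
    """Return alternatives in numerical order if every one parses as a
    number, otherwise in case-insensitive alphabetical order."""
    try:
        return sorted(alternatives, key=float)
    except ValueError:
        return sorted(alternatives, key=str.casefold)


def length_outliers(alternatives, tolerance=0.5):
    """Return alternatives whose word count differs from the median word
    count by more than `tolerance` times that median.

    The 0.5 default is an arbitrary illustrative threshold.
    """
    counts = [len(text.split()) for text in alternatives]
    typical = median(counts)
    return [
        text
        for text, count in zip(alternatives, counts)
        if abs(count - typical) > tolerance * typical
    ]


# Hypothetical alternatives, invented for this example.
print(order_alternatives(["10", "2", "33"]))
print(order_alternatives(["mitosis", "Meiosis", "apoptosis"]))
print(length_outliers([
    "a catalyst",
    "an enzyme",
    "a substance that speeds up a reaction without being consumed",
    "a buffer",
]))
```

A flagged alternative is only a prompt for a second look. Whether a length difference actually cues the answer, and whether the alternatives are plausible and homogeneous, still needs the item writer's judgment.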

Additional Guidelines

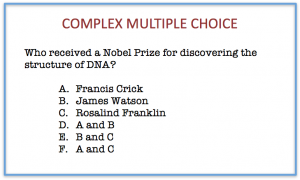

1. Avoid complex multiple choice items, in which some or all of the alternatives consist of different combinations of options. As with "all of the above" answers, a sophisticated test-taker can use partial knowledge to achieve a correct answer.

2. Keep the specific content of items independent of one another. Savvy test-takers can use information in one question to answer another question, reducing the validity of the test.

Considerations for Writing Multiple Choice Items that Test Higher-order Thinking

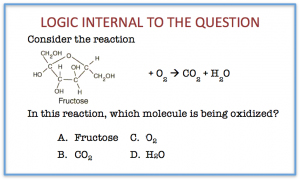

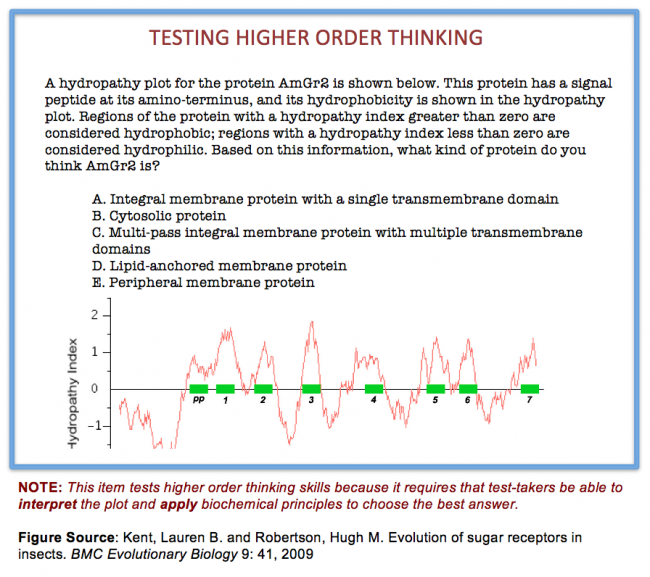

When writing multiple choice items to test higher-order thinking, design questions that focus on higher levels of cognition as defined by Bloom's taxonomy. A stem that presents a problem that requires application of course principles, analysis of a problem, or evaluation of alternatives is focused on higher-order thinking and thus tests students' ability to do such thinking. In constructing multiple choice items to test higher-order thinking, it can also be helpful to design problems that require multilogical thinking, where multilogical thinking is defined as "thinking that requires knowledge of more than one fact to logically and systematically apply concepts to a …problem" (Morrison and Free, 2001, page 20). Finally, designing alternatives that require a high level of discrimination can also contribute to multiple choice items that test higher-order thinking.

Additional Resources

- Burton, Steven J., Sudweeks, Richard R., Merrill, Paul F., and Wood, Bud. How to Prepare Better Multiple-Choice Test Items: Guidelines for University Faculty, 1991.

- Cheung, Derek and Bucat, Robert. How can we construct good multiple-choice items? Presented at the Science and Technology Education Conference, Hong Kong, June 20-21, 2002.

- Haladyna, Thomas M. Developing and validating multiple-choice test items, 2nd edition. Lawrence Erlbaum Associates, 1999.

- Haladyna, Thomas M. and Downing, S. M. Validity of a taxonomy of multiple-choice item-writing rules. Applied Measurement in Education, 2(1), 51-78, 1989.

- Morrison, Susan and Free, Kathleen. Writing multiple-choice test items that promote and measure critical thinking. Journal of Nursing Education 40: 17-24, 2001.

This teaching guide is licensed under a Creative Commons Attribution-NonCommercial 4.0 International License.

Source: https://cft.vanderbilt.edu/guides-sub-pages/writing-good-multiple-choice-test-questions/
